River Management on a Changing Planet

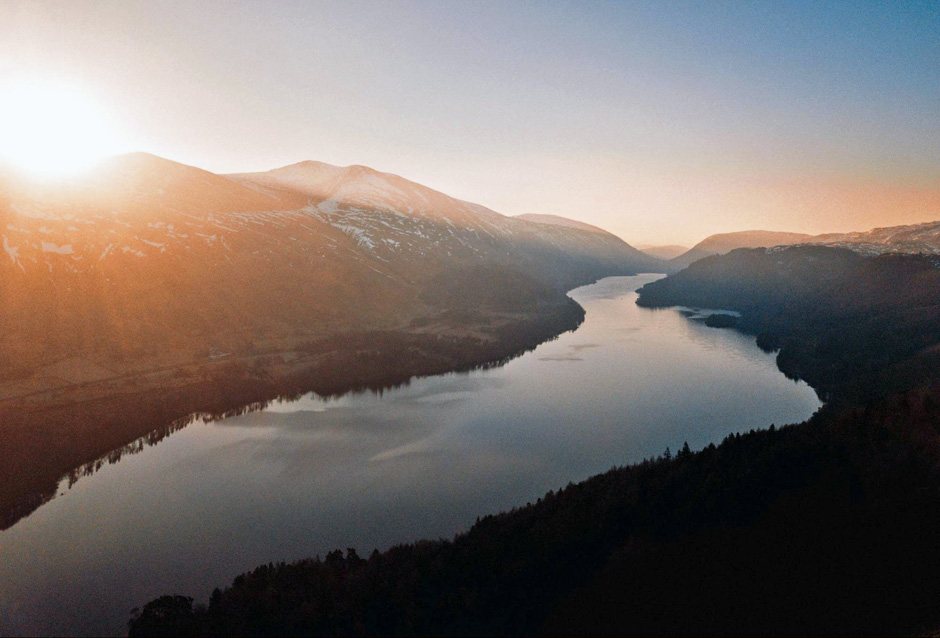

As the world's rivers face growing threats, new river management techniques are more important. (Credit: Photo by Jack Anstey on Unsplash)

River management is inherently complex, demanding mastery of constantly shifting conditions even when the climate is stable. As the climate changes, however, river management will become even more difficult and unpredictable, and old models and techniques are likely to fail more often.

Now, researchers from around the world are calling for changes to how we manage and model the world's rivers. Dr. Jonathan Tonkin, a Rutherford Discovery Fellow at New Zealand’s University of Canterbury, spoke to EM about why he argues that current river management tools are no longer enough when even the historical baseline conditions of river ecosystems are changing.

Dr. Tonkin began exploring the applicability of adaptive management and process-based models together in 2017.

“I was working on a DoD-funded project led by Dave Lytle, Julian Olden and Dave Merritt,” explains Dr. Tonkin. “We were developing the models we are calling for during that time in an effort to forecast riverine biodiversity responses to climate change. However, in 2018, I pulled in a few other people from the US and Australia to meet at the annual Society for Freshwater Science conference in Detroit. That’s when the full effort really kicked off.”

Tonkin and his team outline a new approach in their recent paper that has two components: mechanistic, or process-based, models that link fundamental species biology with river flow regimes; and adaptive management, which adjusts management as new information becomes available. The idea is to move beyond simple ecosystem monitoring toward deeper insight into the underlying biological mechanisms.

“These two approaches combine well as tools for better management under high levels of uncertainty,” details Dr. Tonkin. “The four next steps are really what we consider as key steps to move forward in this approach.”

A four-step process for river management

Dr. Tonkin and his team break down four steps toward a more comprehensive approach to river management in the face of the uncertainty caused by climate change:

- Collect data on mechanisms;

- Describe key processes in models;

- Focus management on bottlenecks; and

- Pinpoint uncertainty.

Collecting high-quality data on mechanisms is essential to the process.

“These data are often what restricts the development of the models we’re advocating for,” Dr. Tonkin describes. “It’s much more costly and time-consuming to collect these data than it is to monitor the abundance of a population through time, for instance. But, it’s essential data to parameterize the models we are calling for.”

Even the best models depend on solid data about natural mechanisms, because those data are what feed a process-based model.

“Otherwise, we have to rely on models that correlate states with states—population abundance with mean annual river flow, for example,” Dr. Tonkin says. “These relationships can be uncovered by a process-based model, but not vice versa. That is, mechanistic models allow us to understand how the state of a population came to be through a variety of different mechanisms.”

Calling for a description of key processes in models means better articulating how riverine populations respond to widely varying hydrological conditions. “This will help us better understand how species will respond to novel flow conditions, which will arrive as the climate changes,” adds Dr. Tonkin.

Focusing management on bottlenecks allows managers to place their attention, effort, and budget where it is most needed.

“Here, we’re advocating for attention to be placed on key life stages, rather than simply on overall population abundance,” remarks Dr. Tonkin. “Demographic population models are key tools in the arsenal here.”
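Demographic population models of this kind are often written as stage-structured (Lefkovitch) projection matrices, where each column says how one individual in a given life stage contributes to each stage the following year. The sketch below is a minimal illustration, not the team's model: the three stages (egg, juvenile, adult) and every vital rate in it are hypothetical, and the long-run growth rate, lambda, is found by simple power iteration.

```python
# Minimal stage-structured (Lefkovitch) matrix model.
# Stage names and all vital rates are HYPOTHETICAL, chosen only for illustration.

STAGES = ["egg", "juvenile", "adult"]

# A[i][j] = contribution of one individual in stage j this year to stage i next year.
A = [
    [0.00, 0.0, 40.0],  # eggs laid per adult
    [0.05, 0.3, 0.0],   # egg -> juvenile survival; juveniles remaining juvenile
    [0.00, 0.2, 0.8],   # juvenile -> adult maturation; adult survival
]

def project(A, n):
    """Advance stage abundances one year: n_next = A n."""
    return [sum(a_ij * n_j for a_ij, n_j in zip(row, n)) for row in A]

def growth_rate(A, iters=1000, tol=1e-12):
    """Power iteration: dominant eigenvalue (lambda) and stable stage distribution."""
    n = [1.0 / len(A)] * len(A)  # start with stage proportions summing to 1
    lam = 0.0
    for _ in range(iters):
        m = project(A, n)
        new_lam = sum(m)          # since sum(n) == 1, this estimates lambda
        n = [x / new_lam for x in m]
        if abs(new_lam - lam) < tol:
            break
        lam = new_lam
    return new_lam, n

lam, stable = growth_rate(A)
print(f"lambda = {lam:.3f}")  # > 1: growing, < 1: declining
print({s: round(p, 3) for s, p in zip(STAGES, stable)})
```

Because vital rates such as egg survival can be written as functions of flow, a matrix like this is one way a flow regime can be linked to a population's trajectory through specific life stages rather than through total abundance alone.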

Both classic and modern river monitoring technologies can and should be deployed with new models. (Credit: Don Becker, USGS) [Public domain]

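The fourth step, pinpointing uncertainty, is what lets managers judge how far to trust a given forecast.

“In order to make decisions, like how much water to hold back or release from a dam, managers need to know the level of uncertainty associated with a particular prediction,” adds Dr. Tonkin.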
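One common way to attach uncertainty to a forecast is Monte Carlo propagation: draw uncertain parameters from assumed distributions, run the model many times, and report an interval rather than a single number. The sketch below assumes a hypothetical linear relationship between the size of a dam release (arbitrary units) and juvenile survival, with a made-up mean and spread for its slope; it illustrates the technique only.

```python
import random
import statistics

def juvenile_survival(release, slope, base=0.2):
    """HYPOTHETICAL linear flow-survival relationship, clipped to [0, 1]."""
    return min(1.0, max(0.0, base + slope * release))

def forecast_interval(release, n_draws=10_000, seed=1):
    """Monte Carlo propagation of slope uncertainty.

    Returns (median, 5th percentile, 95th percentile) of predicted survival.
    """
    rng = random.Random(seed)
    draws = [juvenile_survival(release, rng.gauss(0.05, 0.02))  # ASSUMED slope mean and s.d.
             for _ in range(n_draws)]
    cuts = statistics.quantiles(draws, n=20)  # 19 cut points: 5%, 10%, ..., 95%
    return statistics.median(draws), cuts[0], cuts[-1]

for release in (2, 5, 8):  # release sizes in arbitrary units
    med, lo, hi = forecast_interval(release)
    print(f"release {release}: survival ~{med:.2f} (90% interval {lo:.2f} to {hi:.2f})")
```

In this toy setup the interval widens as the release grows, because uncertainty in the slope is multiplied by the size of the intervention. That is the kind of information a manager weighing a large release would want alongside the central forecast.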

One strength of a process-based model is that it can, in some sense, be tailored to yield the information you are looking for, but that is not the whole story.

“They’re also pretty data-hungry; they require more specific information than correlative approaches do,” says Dr. Tonkin. “However, they don’t necessarily need the long time-series data that is necessary for a robust, statistically driven approach. There are hybrid approaches that can estimate processes from time-series data.”
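As a minimal illustration of the hybrid idea, and not a method taken from the paper, suppose a population follows N(t+1) = s·N(t) + r, where s is annual survival and r is annual recruitment. Both process rates can then be estimated from an abundance time series by ordinary least squares. The series below is synthetic, generated from the assumed values s = 0.7 and r = 30 with added noise.

```python
import random

def simulate(s, r, n0, years, noise_sd, seed=0):
    """Synthetic abundance series from N[t+1] = s*N[t] + r + Gaussian noise."""
    rng = random.Random(seed)
    series = [n0]
    for _ in range(years):
        series.append(s * series[-1] + r + rng.gauss(0.0, noise_sd))
    return series

def estimate_process_rates(series):
    """OLS fit of N[t+1] on N[t]: the slope estimates s, the intercept estimates r."""
    x, y = series[:-1], series[1:]
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    s_hat = sxy / sxx
    r_hat = my - s_hat * mx
    return s_hat, r_hat

series = simulate(s=0.7, r=30.0, n0=20.0, years=2000, noise_sd=5.0)
s_hat, r_hat = estimate_process_rates(series)
print(f"estimated survival = {s_hat:.2f}, recruitment = {r_hat:.1f}")
```

Real hybrid approaches, such as state-space models, also separate observation error from process noise; this sketch ignores observation error for brevity.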

However, the team hopes to emphasize the true strength of process-based approaches: their ability to help scientists and managers see beyond conditions that they have observed in the past. This will enable better forecasting of the effects of flow regimes—even those that are entirely novel, such as those produced by climate change or dam operations.

Combining multiple mechanisms in one model also makes it possible to track what drives a prediction, not only the prediction itself.

“By combining multiple processes/mechanisms, predictions are not limited to the emergent states that they combine to predict,” explains Dr. Tonkin. “The mechanisms can be tracked as well. For instance, it is possible to identify the mechanisms driving collapse of a population, such as increased flood-induced mortality or decreased recruitment of new individuals.”
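To illustrate how a process-based model can attribute a decline to specific mechanisms, here is a toy model in which next year's abundance is N·(1 − flood mortality)·survival + recruits. Swapping one mechanism at a time from a baseline to an altered flow scenario shows how much of the drop each mechanism causes. All rates and both scenarios are hypothetical.

```python
def step(n, flood_mortality, survival, recruits):
    """One year: N_next = N * (1 - flood_mortality) * survival + recruits."""
    return n * (1.0 - flood_mortality) * survival + recruits

def run(rates, years=20, n0=100.0):
    """Project abundance forward under a fixed set of rates."""
    n = n0
    for _ in range(years):
        n = step(n, **rates)
    return n

# HYPOTHETICAL scenarios: an altered flow regime raises flood mortality and cuts recruitment.
BASELINE = {"flood_mortality": 0.1, "survival": 0.9, "recruits": 20.0}
ALTERED = {"flood_mortality": 0.3, "survival": 0.9, "recruits": 12.0}

def attribute_decline():
    """Swap one mechanism at a time from BASELINE to ALTERED and record its effect."""
    base = run(BASELINE)
    effects = {}
    for name in ("flood_mortality", "recruits"):
        rates = dict(BASELINE, **{name: ALTERED[name]})
        effects[name] = run(rates) - base
    total = run(ALTERED) - base
    return total, effects

total, effects = attribute_decline()
print(f"total change: {total:.1f}")
for name, change in effects.items():
    print(f"  {name} alone: {change:.1f}")
```

With these made-up numbers, increased flood mortality accounts for more of the decline than reduced recruitment. The one-at-a-time effects do not add up to the total change, because the mechanisms interact; more formal decomposition methods exist for that reason.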

Finally, sensitivity analyses allow researchers to pinpoint gaps in knowledge of a species’ biology that may be causing poor predictive performance. Filling those gaps faster, with data that feed more accurate models, should ideally let managers respond more rapidly to ecological tipping points and bottlenecks, which are critical moments for any ecosystem.
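A simple version of such a sensitivity analysis nudges each parameter by a small fraction and records how much a forecast changes; the parameters with the largest effects are where better biological data would pay off most. The sketch below applies one-at-a-time finite differences to the equilibrium abundance of a hypothetical model, N* = R / (1 − (1 − m)·s). Global methods such as Sobol indices are a more thorough alternative.

```python
def equilibrium(params):
    """Equilibrium of N_next = N*(1-m)*s + R for HYPOTHETICAL rates m, s, R."""
    m, s, r = params["flood_mortality"], params["survival"], params["recruits"]
    return r / (1.0 - (1.0 - m) * s)

def relative_sensitivities(model, params, delta=0.01):
    """Proportional change in model output per proportional change in each parameter."""
    base = model(params)
    out = {}
    for name, value in params.items():
        bumped = dict(params)
        bumped[name] = value * (1.0 + delta)
        out[name] = ((model(bumped) - base) / base) / delta
    return out

params = {"flood_mortality": 0.1, "survival": 0.9, "recruits": 20.0}
sens = relative_sensitivities(equilibrium, params)
for name, value in sorted(sens.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:16s} {value:+.2f}")
```

In this toy case the forecast is far more sensitive to background survival than to flood mortality, which would suggest prioritizing better survival estimates before refining the flood term.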

“Tipping points are important simply due to the fact that they can lead to a complete change (collapse) in the state of a system/population/community etc. in response to a minor change in the environment,” remarks Dr. Tonkin. “For instance, moving from one major flood every five years to one every six years may be enough to lead to population collapse of a riparian plant.”
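A deliberately simplified calculation shows how a small change in flood frequency can flip a population from growth to decline. Assume, hypothetically, a riparian plant that recruits only in major-flood years: each flood multiplies the population by a recruitment factor (1 + f), and plants survive each year with probability s. Over a flood interval of T years the population changes by (1 + f)·s^T, so the average annual growth rate is λ(T) = s·(1 + f)^(1/T). The values s = 0.8 and 1 + f = 3.5 are made up.

```python
def annual_growth_rate(interval_years, survival=0.8, recruitment_factor=3.5):
    """Mean annual growth when recruitment happens only once per flood interval.

    survival: annual survival (HYPOTHETICAL); recruitment_factor: 1 + f (HYPOTHETICAL).
    """
    cycle_growth = recruitment_factor * survival ** interval_years
    return cycle_growth ** (1.0 / interval_years)

for t in range(3, 9):
    lam = annual_growth_rate(t)
    status = "grows" if lam > 1 else "declines"
    print(f"flood every {t} yrs: lambda = {lam:.3f} -> population {status}")
```

With these assumed values, floods every five years give λ of about 1.03 (slow growth), while floods every six years give about 0.99 (slow decline): a one-year shift crosses the threshold.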

Bottlenecks hold just as much significance, and both of these issues are better understood with process-based models.

“Bottlenecks are important as a single stage of a species’ life cycle may be the limiting element to their persistence,” Dr. Tonkin says. “For instance, it may be the egg stage that is most vulnerable to droughts, so effort should be placed on altering flows to improve egg survival.”
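Matrix-model elasticities are one standard way to locate a bottleneck: each gives the proportional change in the growth rate λ for a proportional change in one vital rate. The sketch below computes them by finite differences on a hypothetical three-stage (egg, juvenile, adult) matrix; the numbers are illustrative only, and analytic elasticities from the matrix's eigenvectors would give the same ranking.

```python
STAGES = ["egg", "juvenile", "adult"]

# HYPOTHETICAL projection matrix: A[i][j] = contribution of stage j to stage i next year.
A = [
    [0.00, 0.0, 40.0],
    [0.05, 0.3, 0.0],
    [0.00, 0.2, 0.8],
]

def growth_rate(A, iters=2000):
    """Dominant eigenvalue of a primitive nonnegative matrix via power iteration."""
    n = [1.0] * len(A)
    lam = 1.0
    for _ in range(iters):
        m = [sum(a * x for a, x in zip(row, n)) for row in A]
        total = sum(m)
        lam = total / sum(n)
        n = [x / total for x in m]
    return lam

def elasticities(A, delta=1e-4):
    """Finite-difference elasticity of lambda for each nonzero entry, keyed (from, to)."""
    base = growth_rate(A)
    out = {}
    for i, row in enumerate(A):
        for j, a in enumerate(row):
            if a == 0:
                continue
            B = [r[:] for r in A]
            B[i][j] = a * (1.0 + delta)
            out[(STAGES[j], STAGES[i])] = (growth_rate(B) - base) / base / delta
    return out

e = elasticities(A)
for (src, dst), value in sorted(e.items(), key=lambda kv: -kv[1]):
    print(f"{src:>8s} -> {dst:<8s} {value:.3f}")
```

With these made-up rates, adult survival has the highest elasticity; with other rates, for instance a species with short-lived adults, a transition such as egg survival could instead be the limiting one. Elasticities of all entries sum to one, which makes them easy to compare across stages.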

Adaptive river management and embracing uncertainty

Adaptive management succeeds because it embraces the change that is coming and helps stakeholders learn by doing, refining management goals along the way. There are many types of uncertainty to quantify, but over time scientists can iteratively reduce the uncertainty in both their data and their models.

“Adaptive management is a tool we use in situations where uncertainty is really high,” remarks Dr. Tonkin. “Climate change is producing novel environmental conditions, so by default, the uncertainty is high—there’s no historical context to base decisions on. Rather than taking uncertainty out of the process, adaptive management is a tool to use that embraces uncertainty. Our argument is that process-based models can be used to reduce uncertainty for decision-makers by providing robust forecasts into novel conditions. So the two approaches can be combined for the best results.”
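One common way to formalize the learning loop in adaptive management is to keep several competing process models, weight each by how well it predicted past outcomes, and update those weights with Bayes' rule after every monitoring cycle. The sketch below is a hypothetical example with two hypotheses about how a spring flow release affects egg survival; the models, the observation noise, and the "observed" data are all invented for illustration.

```python
import math

# Two HYPOTHETICAL process models: predicted egg survival for a release of size q.
MODELS = {
    "flow_helps": lambda q: min(0.9, 0.2 + 0.06 * q),
    "flow_neutral": lambda q: 0.3,
}
OBS_SD = 0.05  # ASSUMED monitoring error (s.d. of observed survival)

def normal_pdf(x, mean, sd):
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2.0 * math.pi))

def update_weights(weights, release, observed):
    """Bayes' rule: posterior weight is proportional to prior weight times likelihood."""
    post = {name: w * normal_pdf(observed, MODELS[name](release), OBS_SD)
            for name, w in weights.items()}
    total = sum(post.values())
    return {name: p / total for name, p in post.items()}

def weighted_forecast(weights, release):
    """Model-averaged prediction that could inform the next release decision."""
    return sum(w * MODELS[name](release) for name, w in weights.items())

weights = {"flow_helps": 0.5, "flow_neutral": 0.5}
# Invented (release, observed survival) pairs from successive seasons.
for release, observed in [(2, 0.33), (5, 0.49), (8, 0.66)]:
    weights = update_weights(weights, release, observed)
    print(release, {k: round(v, 3) for k, v in weights.items()})
```

Early on, a small release barely distinguishes the two hypotheses; larger releases separate their predictions, so the weights shift quickly. Choosing actions partly for what they will teach is the core idea of active adaptive management.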

A process-based model built on the best available data is also, by design, a future-facing approach.

“Climate change is amplifying the pressures on river ecosystems already imposed by urbanization, invasive species, pollution and more,” remarks Dr. Tonkin. “This is leading to a rapid loss of freshwater biodiversity (see http://www.livingplanetindex.org). We need to adopt modeling and management tools that embrace uncertainty and look to the future rather than looking to the past. Free-flowing, healthy, functioning rivers are truly a thing of beauty! The diversity of life they support is immense. The free services they provide to humanity are priceless. We must do all we can to protect them.”
